Plants, maybe. Fungi, hell no.

AI + fungi = you die

Actually I use it as a starting point for fungi. Seek will usually get me to the genus, and from there I can cross reference various books to narrow it down. Hell, sometimes it’ll give me an exact match, and then I just have to perform a yes or no ID with my field guides. That being said, I mostly end up with no, I’m shit scared of all amanitas and most mushrooms just aren’t tasty enough to warrant the effort.

I have heard that spore prints are a reliable way of determining mushroom species (removing the stem, putting the underside of the mushroom on an ink pad, pressing against paper, and comparing the print with those of known species).

I bet an AI could analyze that data pretty well. But since there’s really no market for such a product, if I want it, I would have to make it myself. In which case I highly advise against using it because I really don’t trust me.

There is no ink involved, the spores make the print.

https://doubleblindmag.com/mushrooms/how-to-grow-mushrooms/how-to-make-a-spore-print/

Ah, thanks for the correction, never done it myself and learned about it a long time ago so I’m not surprised I remembered it wrong.

Fungi is literally fun tho. Mostly to see how wrong that fucker is. It’s just as wrong as me

That’s essentially what the Flood is in Halo

I don’t actually know if it’s considered a deepfake when it’s just a voice; but I’ve been using the hell out of Speechify, which basically deepfakes voices and pairs them with a text input.

…so… nursing school, we have an absolute fuck-ton of reading assignments. Staring at a page of text makes my brain melt, but thankfully nowadays everything’s digital, so I can copy entire chapters at a time, and paste them into Speechify. Now suddenly I have Snoop Dogg giving me a lecture on how to manage a patient as they’re coming out of general anesthesia. Gets me through the reading fucking fast, and I retain it so, SO much better than just trying to cram a bunch of flavorless text.

Speechify also pays the people whose voices they’re using rather than taking them from publicly available videos and recordings without permission.

That’s also the business model behind ad localization now, they’ll pay the actor once for appearing on set and then pay them royalties to keep AI editing the commercial to feature different products in different countries.

If they’re up front about it and if the actor agrees to it (as with Speechify), I don’t see a problem with that. SAG should also be involved to try and determine fair compensation.

Wait that’s genius. I would listen to Snoop Dogg teaching me particle physics any day of the week.

I think the key here is you’re using it for yourself only.

I think it comes down more to understanding what the tech is potentially good at, and executing it in an ethical way. My personal use is one thing; but Speechify made an entire business out of it, and people aren’t calling for them to be burned to the ground.

As opposed to Google’s take of “OMG AI! RUB IT INTO EVERYONE’S NOSE, THEY’RE GONNA LOVE IT!” and just slapping it onto the internet, and then pretending to be surprised when people ask for a pizza recipe and it tells them to add Elmer’s Glue to it…

Two controlled inputs giving a predictable output; vs just letting it browse 4chan and see what happens. The tech industry definitely seems to lean toward the latter, which is fucking tragic, but there are gems scattered throughout the otherwise pure pile of shit that LLMs are at the moment.

In my opinion using someone’s voice without their consent in a public way is unethical, but you doing it in private doesn’t hurt anyone.

Say that again, but think of a fat old white dude jerking off to what he’s created, and you’ll figure out several ways it could hurt someone.

Do not use ai for plant identification if it actually matters what the plant is.

Just so ppl see this:

DO NOT EVER USE AI FOR PLANT IDENTIFICATION IN CASES WHERE THERE ARE CONSEQUENCES TO FAILURE.

For walking along and seeing what something is, that’s fine. No big deal if it tells you something’s a turkey oak when it’s actually a pin oak.

If you’re gonna eat it or think it might be toxic or poisonous to you, if you want to find out what your pet or livestock ate, if you in any way could suffer consequences from misidentification: do not rely on ai.

You could say the same about a plant identification book.

It’s not so much that AI for plant identification is bad, it’s that the higher the stakes, the more confident you need to be. Personally, I’m not going foraging for mushrooms with either an AI-based plant app or a book. Destroying Angel mushrooms look pretty similar to common edible mushrooms, and the key differences can disappear depending on the circumstances. If you accidentally eat a destroying angel mushroom, the symptoms might not appear for 5 to 24 hours, and by then it’s too late. Your liver and kidney are already destroyed.

But, I think you could design an app to be at least as good as a book. I don’t know if normal apps do this, but if I made a plant identification app, I’d have the app identify the plant, and then provide a checklist for the user to use to confirm it for themselves. If you did that, it would be just like having a friend just suggest checking out a certain page in a plant identification book.
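A minimal sketch of that "suggest, then make the user confirm" flow, in Python. Everything in it is hypothetical: the `CHECKLISTS` entries are placeholders rather than real identification criteria (real ones would have to come from an expert-reviewed field guide), and `confirm_identification` is an illustrative name, not any existing app's API.

```python
from dataclasses import dataclass


@dataclass
class Checklist:
    features: list[str]    # things the user must verify on the real plant
    lookalikes: list[str]  # similar species the user should rule out


# Placeholder data only. Real entries must come from an expert-reviewed guide.
CHECKLISTS = {
    "species_a": Checklist(
        features=["placeholder feature 1", "placeholder feature 2"],
        lookalikes=["species_b"],
    ),
}


def confirm_identification(suggested: str, answers: dict[str, bool]) -> str:
    """Treat the model's guess as a suggestion; the user's checks decide."""
    checklist = CHECKLISTS.get(suggested)
    if checklist is None:
        return "unconfirmed: no checklist available for this suggestion"
    # Any feature the user did not positively confirm blocks the match
    unchecked = [f for f in checklist.features if not answers.get(f, False)]
    if unchecked:
        return "unconfirmed: could not verify " + ", ".join(unchecked)
    return (
        f"matches checklist for {suggested}; still rule out: "
        + ", ".join(checklist.lookalikes)
    )
```

The point of the design is that the image model only ever picks which checklist to show; it never gets to say "confirmed" on its own.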

The problem with AI is that it’s garbage in, garbage out. There’s some AI generated books on Amazon now for mushroom identification and they contain some pretty serious errors. If you find a book written by an actual mycologist that has been well curated and referenced, that’s going to be an actually reliable resource.

Are you assuming that AI in this case is some form of generative AI? I would not ask chatgpt if a mushroom is poisonous. But I would consider using a convolutional neural net based plant identification software. At that point you are depending on the quality of the training data set for the CNN and the rigor put into validating the trained model, which is at least somewhat comparable to depending on a plant identification book to be sufficiently accurate/thorough, vs depending on the accuracy of a story that genAI makes up based on reddit threads, which is a much less advisable venture

The books on Amazon are vomited out of chat GPT. If there’s a university-curated and trained image recognition AI, that’s more likely to be reliable provided the input has been properly vetted and sanitized.

If you’re using the book correctly, you couldn’t say the same thing. Using a flora book to identify a plant requires learning about morphology and by having that alone you’re already significantly closer to accurately identifying most things. If a dichotomous key tells you that the terminating leaflet is sessile vs. not sessile, and you’re actually looking at that on the physical plant, your quality of observation is so much better than just photographing a plant and throwing it up on inaturalist

Not to mention, the book is probably going to list look-alike plants, and mention if they are toxic. AI is just going to go “It’s this thing”.

You can easily say the same thing. Use the image identification to get a name of the plant and google it to read about checking if the sessile is leafy or no.

The difference between a reference guide intended for plant identification written and edited by experts in the field for the purposes of helping a person understand the plants around them and the ai is that one is expressly and intentionally created with its goal in mind and at multiple points had knowledgeable skilled people looking over its answer and the other is complex mad libs.

I get that it’s bad to gamble with your life when the stakes are high, but we’re talking about the difference between putting it on red and putting it on 36.

One has a much, much higher potential for catastrophe.

sorry couldn’t hear you over the CRUNCHING OF MY MEAL

I have a feeling I know where your username came from.

Forgo identification and eat the plant based on vibes like our ancestors.

Like I get what you’re saying but this is also hysterical to the point that people are going to ignore you.

Don’t use AI ever if there are consequences? Like I can’t use an AI image search to get rough ideas of what the plant might be as a jumping off point into more thorough research? Don’t rely solely on AI, sure, but it can be part of the process.

The blanket term “AI” has set us back quite a lot I think.

The plant thing and the deepfakes/search engines/chatbots are two entirely different types of machine learning algorithm. One focussed on distinguishing between things, the other focussed on generating stuff.

But “AI” is the marketable term, and the only one most people know. And so here we are.

I hate when streamers/gamers/etc refer to procedural generation as “ai generated”. It’s infuriating.

I particularly “Love” that a bunch of like, procedural generation and search things that have existed for years are now calling themselves “AI” (without having changed in any way) because marketing.

Reminds me of how everything on a computer used to be a “program”, but now they’re all just “apps”

I will die on the hill of calling them computer programs.

Same. It’s like the one thing where I am a total boomer on

My pet theory is that Apple started to use that term because “App” can also be short for “Apple”.

I buy it

It just works

What’s a computer?

I read a story on CBC the other day that was all about how an AI voice was taking over from hosts on off-hours at some local radio station, then deeper in the article it revealed that everything the “AI” reads was written by a human. So it was about someone using text-to-speech technology that has been around since at least the 70s the whole time. Hardly newsworthy in any way except for “IT’S AI!”

Mind you there -are- TTS tools that use machine learning (which is what advertisers call “AI” now) for more realistic voices. No idea if the radio was using those at all though.

Oh man this one drives me up the wall too.

Someone literally with a straight face said how cool Minecraft has AI generated worlds and I wanted to flip a table.

You’re talking about types of machine learning algorithms. Is that a more precise term that should be used here instead of AI? And would the meme work better if it was used? I’m asking, because I really don’t understand these things.

There are proper words for them, but they are ~technical jargon~. It is sufficient to know that they are different types of algorithm, only really similar in that both use machine learning.

And would the meme work better if it was used

No because it is a meme, and if people had learned the proper words for things, we wouldn’t need a meme at all.

Both use machine learning algorithms that are modelled off the behaviour of neurons.

They are still different algorithms but they’re not that wildly different in the grand scale of the field of machine learning.

deleted by creator

The stuff people don’t like is generative AI

I suppose both plantnet and deep fakes have conv networks as part of their architectures though

Likely transformers now (I think SD3 uses a ViT for text encoding, and ViTs are currently one of the best model architectures for image classification).

It’s particularly annoying because those are all AI. AI is the blanket term for the entire category of systems that are man made and exhibit some aspect of intelligence.

So the marketing term isn’t wrong, but referring to everything by its most general category is error prone and makes people who know or work with the differences particularly frustrated.

It’s easier to say “I made a little AI that learned how I like my tea”, but then people think of something that writes full sentences and tells me to put dogs in my tea. “I made a little machine learning based optimization engine that learned how I like my tea” conveys it much less well.

AI is the new flavor, just like 2.0, SIM-everything, VIRTUAL-everything, CYBER-everything, were before. Eventually good use cases will emerge, and the junk will be replaced by the next buzzword.

Good use cases for AI already exist

And I’m saying this as a certified hater of GenAI

Machine Learning as an invention has already been used for good, useful things. It’s just that it never got caught up in hype like the modern wave of Generative Transformers (which is apparently the proper term for those overhyped chatbots and picture generators)

I am a physicist. I am good at math, okay at programming, and not the best at using programming to accomplish the math. Using AI to help turn the math in my brain into functional code is a godsend in terms of speed, as it will usually save me a ton of time even if the code it returns isn’t 100% correct on the first attempt. I can usually take it the rest of the way after the basis is created. It is also great when used to check spelling/punctuation/grammar (so using it like the glorified spellcheck it is) and formatting markup languages like LaTeX.

I just wish everyone would use it to make their lives easier, not make other people’s lives harder, which seems to be the way it is heading.

With all the hot takes and dichotomies out there, it would be nice if we could have a nuanced discussion about what we actually want from AI right now.

Not all applications are good and not all are bad. The ideas that you have for AI are so interesting, I wish we could just collect those. Would be way more helpful than all the AI shills or haters rn.

nuanced discussion about what we actually want from AI right now.

👆

So on Bluesky, the non-free almost-Twitter Twitter replacement, as anti-AI as X-Twitter is pro: you see extreme anti-AI sentiment with zero room for any application of the tech, and I have to wonder if defining the tech is part of the problem.

They do want Gmail to filter spam, right?

They don’t hate plant ID apps, do they?

I’m guessing they mean “I don’t need ChatGPT, which was enabled by theft, and I don’t want chatbots in other apps either.”

But they come out saying effectively “don’t filter spam!” the way they talk. At least arguably: not like every expert in the field would use the exact same definition, but still I doubt the average absolutist is fully aware what their message may come across as.

Also works well for the opposite use case.

I’m a good programmer but bad at math and can never remember which algorithms to use so I just ask it how to solve problem X or calculate Y and it gives me a list of algorithms which would make sense.

Yeah I’ve been using it to help my novice ass code stuff for my website and it’s been incredible. There’s some stuff I thought yeah I’m probably never gonna get around to this that I rocketed through in an AFTERNOON. That’s what I want AI for. Not shitty customer service.

Great examples. The most valuable use for me has been writing SQL queries. SQL is not a part of my job description, but data informs choices I make. I used to have to ask a developer on my team all the questions I had and pull them off their core work to get answers for me, then I had to guess at interpreting the data and inevitably bug them again with all my follow-up questions.

I convinced the manager to get me read access to the databases. I can now do that stuff myself. I had very basic understanding of SQL before, enough to navigate the tables and make some sense of reading queries, but writing queries would have taken HOURS of learning.

As it is, I type in basics about the table structure and ask my questions. It spits out queries, and I run them and tweak as needed. Without AI, I probably would have used my SQL access twice in the past year and been annoyed at how little I was able to get, but as it is I’ve used it dozens of times and been able to make better informed decisions because of it.

“AI renaming image files for easier search”

You’re cool, though, you can stay

Alphafold? Beautiful. Perfect. No notes.

Nudeify type apps? Fuuuuck alllll the way off!

We’re in that awkward part of AI where all the degenerates are using it in unethical ways, and it will take time for legislation and human culture to catch up. The early internet was a wild place too.

I still don’t see how AI-generated porn is any different from photoshopping someone’s face on to someone else’s naked body.

It’s less effort and typically more realistic (in the sense that it looks more real, not that it is)

But it’s unethical either way, don’t make non-consensual porn

I don’t know much about AI porn but I see a huge difference between putting a real person’s face on a real person’s nude body and putting an imaginary face on an imaginary nude body.

The difference is that plant identification is a classification problem, not an LLM.

Not all of AI is LLMs, most aren’t.

I think state machines are cool and groovy. I still don’t understand genetic algorithms but I wish I did.

15 years ago we were all saying “AI is just a series of IF statements” because of expert systems and y’all forgot

Genetic algorithms kinda suck as they use random variations and breeding to solve a problem which is much slower than using backpropagation with any decent reward modeler. It’s the difference between selective breeding and gene splicing in the real world.
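For anyone who wants to see the difference, here is a toy Python sketch under stated assumptions. The problem (find the `x` that minimises `(x - 3)^2`), the population sizes, and the mutation width are all made up for illustration. Plain gradient descent with a hand-written derivative stands in for backpropagation, which applies the same idea through the layers of a network.

```python
import random


def loss(x: float) -> float:
    # Toy problem: the best x is 3.0
    return (x - 3.0) ** 2


def genetic_search(generations: int = 50, seed: int = 0) -> float:
    rng = random.Random(seed)
    population = [rng.uniform(-10.0, 10.0) for _ in range(20)]
    for _ in range(generations):
        # Selection: keep the 5 candidates with the lowest loss
        parents = sorted(population, key=loss)[:5]
        children = []
        for _ in range(15):
            a, b = rng.sample(parents, 2)
            # Breeding (average two parents) plus a random mutation
            children.append((a + b) / 2 + rng.gauss(0.0, 0.3))
        population = parents + children
    return min(population, key=loss)


def gradient_descent(steps: int = 50, lr: float = 0.1) -> float:
    x = -10.0
    for _ in range(steps):
        grad = 2.0 * (x - 3.0)  # derivative of the loss, known in closed form
        x -= lr * grad          # step directly downhill
    return x
```

The genetic version only ever calls `loss` and keeps whatever happened to score well, so it works even when no derivative is available. The gradient version uses the slope to know which way to move, which is why it tends to need far fewer evaluations when a gradient exists.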

The most annoying thing since the rise of LLMs is that everyone thinks that all of AI is just LLMs

Classification machine learning models can also be neural networks, which is something that was called AI also

Yeah I used to think that reinforcement learning would be trending. But hey, maybe next time.

AI isn’t just about LLMs. Modern AI libraries (pytorch, tensorflow etc.) can be used to train models on all sorts of data.

Some customer support “bots” could be considered classification problems, no? At least in so far as which department does a call get routed to.

At least it’s routing you to a department instead of trying to help you solve the issue yourself by showing you different help pages you already looked at before trying to contact support.

If you actually looked at the help pages before contacting support, you are in the minority.

Could be. Classification is a type of problem. LLM is a type of model. You can use LLMs to solve classification problems. There’s a good chance that’s what’s happening here.
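A rough Python sketch of the "problem vs. model" distinction, assuming a made-up call-routing task. The department names and keywords are invented, and `complete` is a hypothetical prompt-to-text function standing in for whatever LLM a vendor might use, not a real API.

```python
from typing import Callable, Protocol

DEPARTMENTS = ["billing", "returns", "technical"]


class Router(Protocol):
    """The problem: map a message to exactly one department label."""

    def route(self, message: str) -> str: ...


class KeywordRouter:
    """A hand-written classifier: no machine learning involved."""

    KEYWORDS = {"refund": "returns", "invoice": "billing", "crash": "technical"}

    def route(self, message: str) -> str:
        text = message.lower()
        for keyword, department in self.KEYWORDS.items():
            if keyword in text:
                return department
        return "technical"  # arbitrary fallback for this sketch


class LLMRouter:
    """Same problem, different model: ask a text generator to pick a label."""

    def __init__(self, complete: Callable[[str], str]):
        # `complete` is a hypothetical prompt -> text function, not a real API
        self.complete = complete

    def route(self, message: str) -> str:
        prompt = f"Pick one of {DEPARTMENTS} for this message: {message}"
        answer = self.complete(prompt).strip().lower()
        # Generated text is free-form, so reject anything outside the label set
        return answer if answer in DEPARTMENTS else "technical"


def fake_llm(prompt: str) -> str:
    # Stand-in so the sketch runs without any external service
    return "Billing\n"
```

Both classes satisfy the same `Router` interface, so the caller cannot tell which kind of model is behind it. That is the sense in which classification is the problem and the LLM is just one possible way to solve it.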

upscaling of old media is pretty cool too

Depends.

Old, niche videogames where the fanbase doesn’t have the capacity to do it? Sure. James Cameron replacing Arnold with a UHD leather Muppet in True Lies? Not so much.

I would watch that remake though

Dammit, I made the prospect sound too much like fun. :/

I would watch an all-Muppet True Lies remake!

Upscayl app does this quite well without ChatGPT AI stuff

I’ve had to literally perform a Google search to find a customer support phone number before. Because the website of the company just kept redirecting me in circles.

Their phone support was just as useless, though.

It was GameStop, by the way.

Gethuman.com is my go-to. They used to be much better than they are now, but it’s still routinely better than trying to navigate automated systems or find phone numbers myself

We need to strike back with an AI customer that works through all the automated options for us and alerts us once we can finally talk or chat with a human again.

I’ve just taken to randomly pressing buttons until it gives me a human.

I’ve had automated systems just straight up hang up on me when I ask for a customer service representative instead of actually linking me to one. Because it says it “couldn’t understand me”.

If I won the lottery, I’d invest in that.

Fuck YES

Using it for plant identification is fine as long as it’s an AI designed/trained for plant ID (even then don’t use it to decide if you can eat it). Just don’t use an LLM for plant ID, or for anything else relating to actual reality. LLMs are only for generating plausible-sounding strings of text, not for facts or accurate info.

Best and easiest way is to reverse image search from a photo, it’s easy to look through the results for yourself and see what actually matches (it’s frequently not the first search result). Perhaps there’s some kind of AI involved in reverse image search, but searching like this is infinitely preferable to me instead of some bot telling me an answer which may or may not be correct. It’s not “convenient” if you actually care about the answer.

Tbh anything that can give you a curated set of options, and some resources that can help you make the final decision is pretty incredible. But that’s the thing about most AI - it needs some human vetting for good results, regardless of how powerful it is

Mushroom identification is extra flaky.

Expert level mushroom identification is a skill and I wouldn’t recommend anyone use those apps and assume a mushroom is correct. (And especially don’t eat it without absolutely verifying)

Plant identification - not concerned. Most normal people aren’t ingesting a plant in the wild. And I mean if you’re rubbing against a plant and get a reaction, ideally that’s a lesson you only learn once.

First time I’ve seen this meme template. Love it.

Sign up with iNaturalist for plant and animal identification! Citizen science is good for you.

The button to take a photo of a plant/animal?

“Observe”

Hold up gang, I need to observe this species right quick

Instant scientist cred

hmm guess which one also doesn’t suck the energy equivalent of a sizeable town

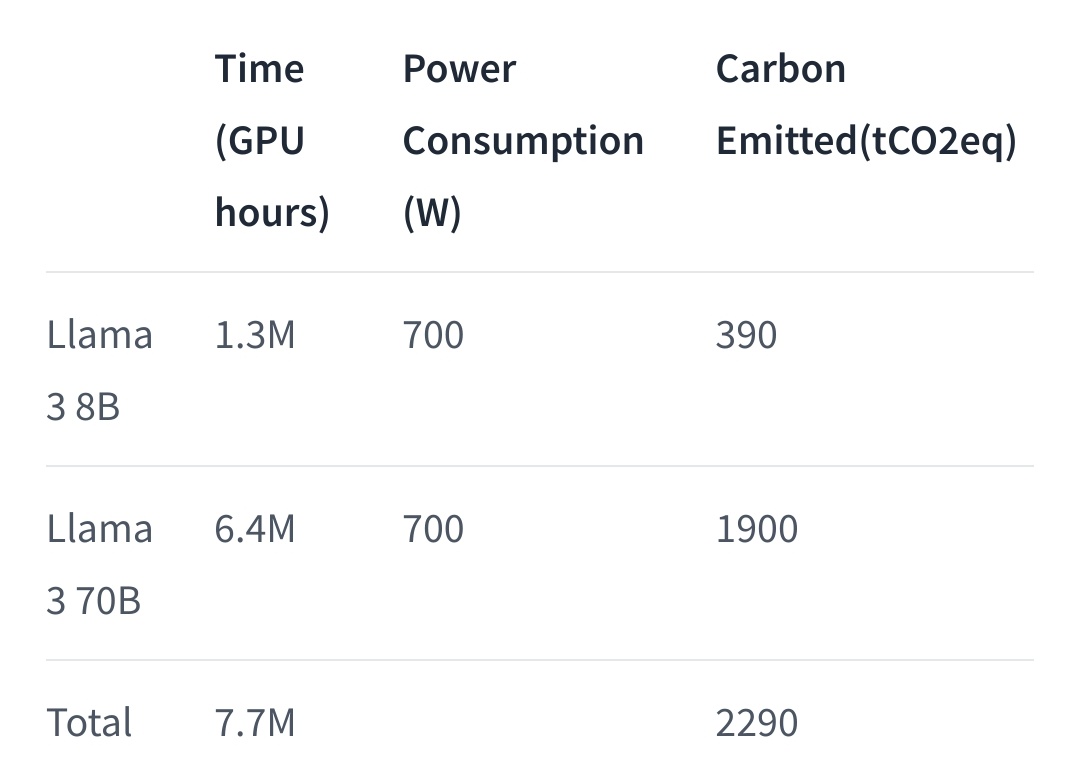

I’d like all ai service to publish the energy used in training the model and performing inference.

“Queries use an average of X kWh of power. A model training run requires X MWh, and the development of this model over the years required X TWh of power.”
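As a sketch of what a machine-readable version of that label could look like, here is a small Python example. The `EnergyDisclosure` class and its field names are hypothetical, not any existing reporting standard, and any numbers plugged in would have to come from the provider.

```python
from dataclasses import dataclass


@dataclass
class EnergyDisclosure:
    kwh_per_query: float     # average energy per inference request
    training_run_mwh: float  # energy for one full training run
    development_twh: float   # cumulative energy over the model's development

    def statement(self) -> str:
        return (
            f"Queries use an average of {self.kwh_per_query} kWh. "
            f"A model training run requires {self.training_run_mwh} MWh, "
            f"and development required {self.development_twh} TWh."
        )

    def queries_equal_to_training_run(self) -> float:
        # 1 MWh = 1,000 kWh, so convert before dividing
        return self.training_run_mwh * 1_000 / self.kwh_per_query
```

Putting the three figures in one structure makes comparisons like "how many queries add up to one training run" a one-line calculation instead of a unit-conversion exercise.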

Then we could judge companies by that metric. Of course, rich people would look for the most power-draining model for the sake of it.

That’s already something that Meta is doing for their Llama models:

You can extrapolate openai models consumption from these I guess

ok, but

- Is it still bad if they use renewables? in which case, it’s not horrendous, is it?

- what about the rest of their servers?

- Fuck facebook

If we are abundant in renewable energy, no, but if we are still at a level where available renewable energy can be used to replace non-renewable, then AI tech needs to justify its use cases too.

and servers yes, social media related data center energy consumption should be put under heavy scrutiny too. Especially considering some energy hungry social media platforms like facebook are lately causing more harm than good (on other fronts such as political propaganda and racism). I doubt any of these are gonna happen soon though since many governments are heavily invested in using social media and LLM chatbots for propaganda and surveillance.

We already have a way for society to decide what is and isn’t worth spending power and effort on and it’s called money.

Increase carbon taxes to incentivize clean fuel sources and ban predatory advertising and data tracking behaviour because it’s problematic.

We do not need to set up a separate shadow economy to gatekeep what is and isn’t worth spending electricity on.

- Power consumption is still power consumption, so 2 290 000 kg CO2 is a lot, even if it’s way lower than what it would have been with coal plants

- They only talk about power consumption and not server hardware footprint, cause power consumption is easier to offset

- Yes

development of this model over the years required X TWh of power

This part is kind of hard to measure. When do you start counting? From the first work that informed the research direction eventually leading to this model? From the point where the concept of this final model first came about? Do you split the energy usage between multiple models that came from the same work?

That’s something of a red herring. The source of that energy matters more than how much is used (use renewables where possible) - your ire is directed at entirely the wrong place; and also how much is used in computers and datacentres doing other stuff? If I’m generating pictures I’m not playing games, which is using the same card and probably more constantly.

I gotta congratulate you though, that’s an argument that to my knowledge was NOT levelled against photography when that was invented. I mean like all the other arguments it’s bollocks but at least it’s new! <pretty much every other argument against ai art was levelled at photography and many of them at pre-mixed paints before that!>

by no means is this a new argument, and it is not aimed at individual use cases, so “If I don’t ai I game” doesn’t really apply and is severely shortsighted

https://www.theguardian.com/technology/2024/mar/07/ai-climate-change-energy-disinformation-report

https://www.forbes.com/sites/arielcohen/2024/05/23/ai-is-pushing-the-world-towards-an-energy-crisis/

Perhaps do a bit of research to update your latest knowledge before writing cocky answers

Again, we need to shift towards renewables. AI is not the problem you’re angry with here, stop railing against new technology and new artistic media and start railing against oil companies

And by new I meant “not over a hundred years old”, not “over three months old”

you really like someone else doing your job for you:

https://penntoday.upenn.edu/news/hidden-costs-ai-impending-energy-and-resource-strain

https://www.numenta.com/blog/2022/05/24/ai-is-harming-our-planet/

(disclaimer: this is just to argue that these arguments are almost as old as chatgpt, I am not endorsing any of the articles above and have not read all)

In a world that is in an energy crisis and still can’t produce enough renewable energy to replace most of its non-renewables, I am not going to rail against new tech, but I will rail against billionaires who try to abuse ownership of a new tech to gain more money with questionable returns compared to the damage it’s causing. I am going to note again that this is not railing/criticism against all use cases and development of AI. But for a minute fraction of the compute requirements of AI platforms like chatgpt, academics can probably come up with AI/machine learning algorithms that can maybe optimise energy usage and distribution.

AI search is great.

The more “searchey”, and less “generativey”, the better. Which goes against the direction every provider is going, but it’s still great.

I like using perplexity because the ads aren’t in your face and it’s pretty good at providing concise answers… And it doesn’t fuck with my news feed every time I look up some random thing

It’s really good for searching for things you don’t already know the name of.